Posted by John Keller

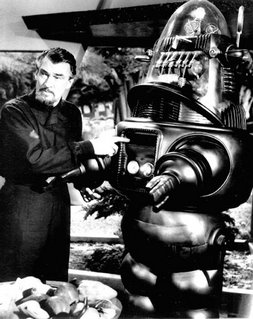

Lots of people have felt queasy about monster robots ever since they saw that cinematic classic Forbidden Planet, made half a century ago with its mechanical star Robby the Robot. The I, Robot movie with Will Smith a few years ago didn't make these folks feel much better.

I admit that it can be fun to see creepy robots at the movies, but I think it's important to acknowledge a clear difference between monster movie robots and today's generation of unmanned vehicles.

Autonomous vehicles such as the U.S. MQ-1 Predator and MQ-9 Reaper unmanned aerial vehicles can do destructive things -- like finding and killing terrorists before they can kill coalition soldiers. It doesn't necessarily follow that, because these machines can do destructive things, they pose unacceptable risks to humanity.

Someone should tell this to a university professor in Sheffield, England, named Noel Sharkey, who in a keynote address to the Royal United Services Institute (RUSI) is warning of the threat posed to humanity by new robot weapons being developed by powers worldwide.

A University of Sheffield news release, headlined "Killer military robots pose latest threat to humanity," lays out Professor Sharkey's case, which essentially boils down to these points:

-- we are beginning to see the first steps towards an international robot arms race;

-- robots may become standard terrorist weapons to replace the suicide bomber;

-- the United States is giving priority to robots that will decide on where, when, and whom to kill;

-- robots are easy for terrorists to copy and manufacture;

-- robots have difficulty discriminating between combatants and innocents; and

-- it's urgent for the international community to assess the risks of robot weapons.

It's pretty easy to see where this line of reasoning is going: robots can do bad things, therefore we ought to consider banning them. I just don't follow the logic. It's the same argument as the one for banning guns, which basically says that guns kill; ban guns and nobody gets killed.

That argument obviously doesn't wash. Banning guns succeeds only in disarming law-abiding citizens, and leaving them at the mercy of criminals who don't particularly care if there's a law prohibiting them from having guns or not. The bad guys get guns no matter what.

It's the same thing with autonomous vehicles. Ban them, and only the criminal terrorists will have them. Law-abiding countries would be at a disadvantage.

Let's look at Professor Sharkey's line of reasoning point by point. Let's consider that we could be in the beginning of an international robot arms race. No way to prevent this, and so what if we are? No weapon has ever been invented that wasn't put to use. Trying to prevent the spread of robot technology would be like trying to prevent the spread of the automobile. People want 'em, they'll get 'em.

Second point: robots may become terrorist weapons to replace the suicide bomber. Let's remember that Muslim suicide bombers get 72 virgins in heaven if they succeed in blowing themselves up. I doubt if they'll want to share those virgins with robots.

Next point: the U.S. is giving priority to robots that will decide on where, when, and whom to kill. Little chance of this. The so-called doctrine of "man in the loop" is part of the fabric of military procedures. People make the kill decisions, not the robots. Besides, everyone these days is scared to death of lawsuits. No robot manufacturer or user would allow his machines to have sole control over life-and-death decisions.

How about the claim that robots have difficulty discriminating between combatants and innocents? See paragraph above.

Let's consider that robots are easy for terrorists to copy and manufacture. Okay, so are guns, knives, personal computers, and can openers. Look what the terrorists have done with improvised explosive devices. They're pretty good at it, but U.S. forces and their allies are dealing with this threat. Plus, anything the terrorists build, we can build better. Let the robot races begin!

Last point: it's urgent for the international community to assess the risks of robot weapons. Get the U.N. involved in autonomous vehicle policy? No, thanks. I spent 10 years as a reporter in Washington watching the federal government screw up nearly everything it touched. The U.N. is even worse.

With apologies to Professor Sharkey, I think we should tend to our robots, and let the other guys tend to theirs.

I can hear the sounds of gears and miniature motors turning as I read the article. May the robots live on!

David Brockman, Utilytech Corp